The overdiagnosis dilemma

An evolving headwind for medical diagnostics ventures

In AI, data is everything. The hottest tech sector thrives on massive datasets—from Photobucket, now selling image data for training purposes, to marketplaces like Defined.ai connecting content owners with buyers in fields like audio, medical records, and even crime scene data. Google’s recent $60 million deal with Reddit is just one example of the demand for rich corpuses of content.

But is there such a thing as too much data? After all, we're smart enough to distinguish meaningful information from mere noise. Right?

In contrast to the insatiable hunger for ones and zeroes in AI, the medical diagnostics sector has a small but growing problem with an excess of information stemming from overdiagnosis.

Overdiagnosis refers to the detection of conditions that will never cause symptoms or death, or to detection that provides no net benefit to mortality or quality of life. This results in unnecessary treatments, ballooning costs, and patient anxiety.

To be clear, overdiagnosis is not misdiagnosis, nor does it arise because our instruments of disease detection make errors. It’s not a mistake per se, but emerges from a failure to consider the downstream harms intrinsic to diagnosis. It creates information that the patient thinks is useful, but that instead launches a cascade of further medical intervention to address a problem that will never materialize.

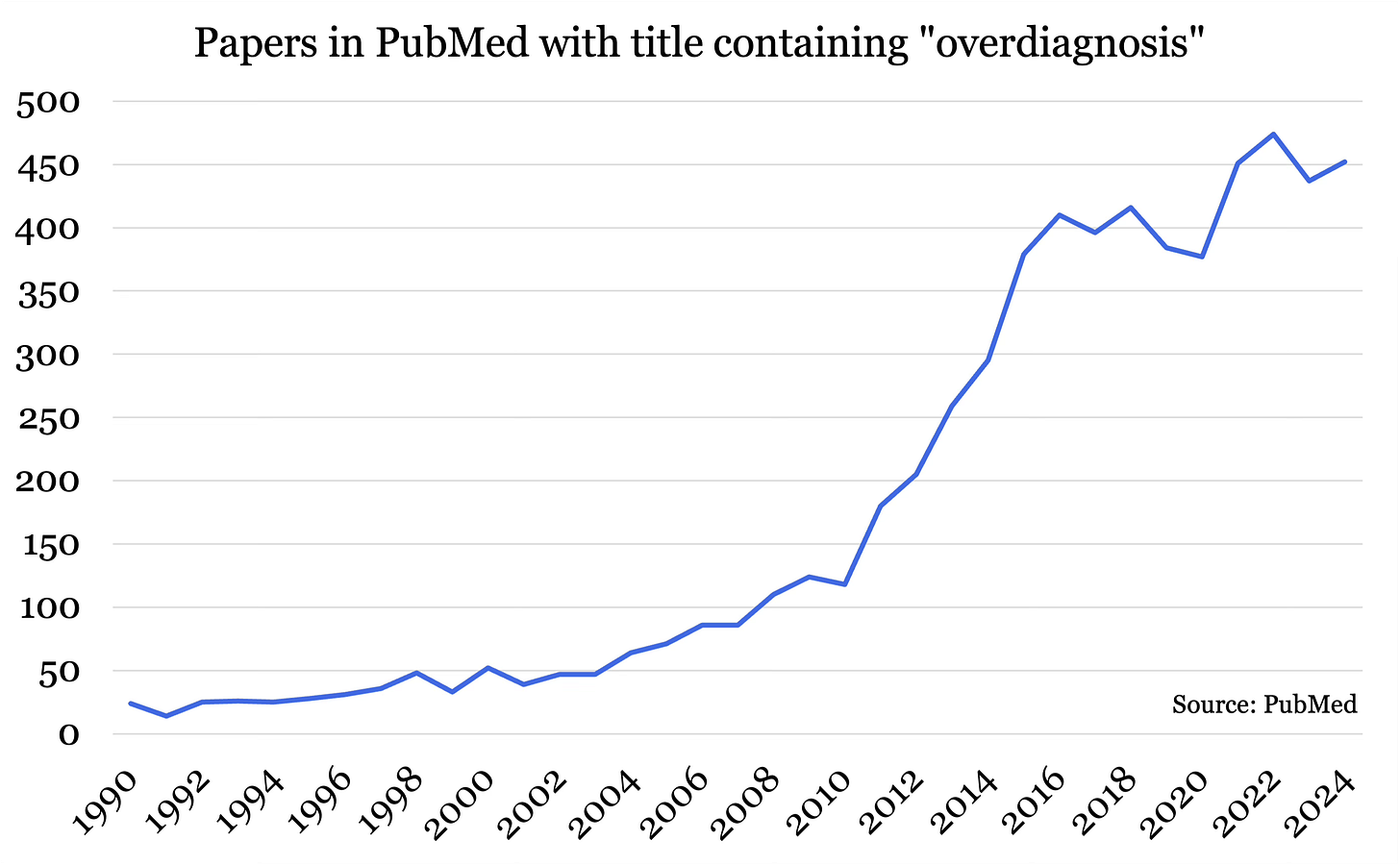

And it’s gaining attention.

Low value care and overtreatment are estimated to cost the US healthcare system up to $100B per year, and healthcare advocates are taking notice. The Choosing Wisely Campaign, working with over 80 medical societies in the US, identifies unnecessary tests and procedures and initiates de-implementation campaigns.

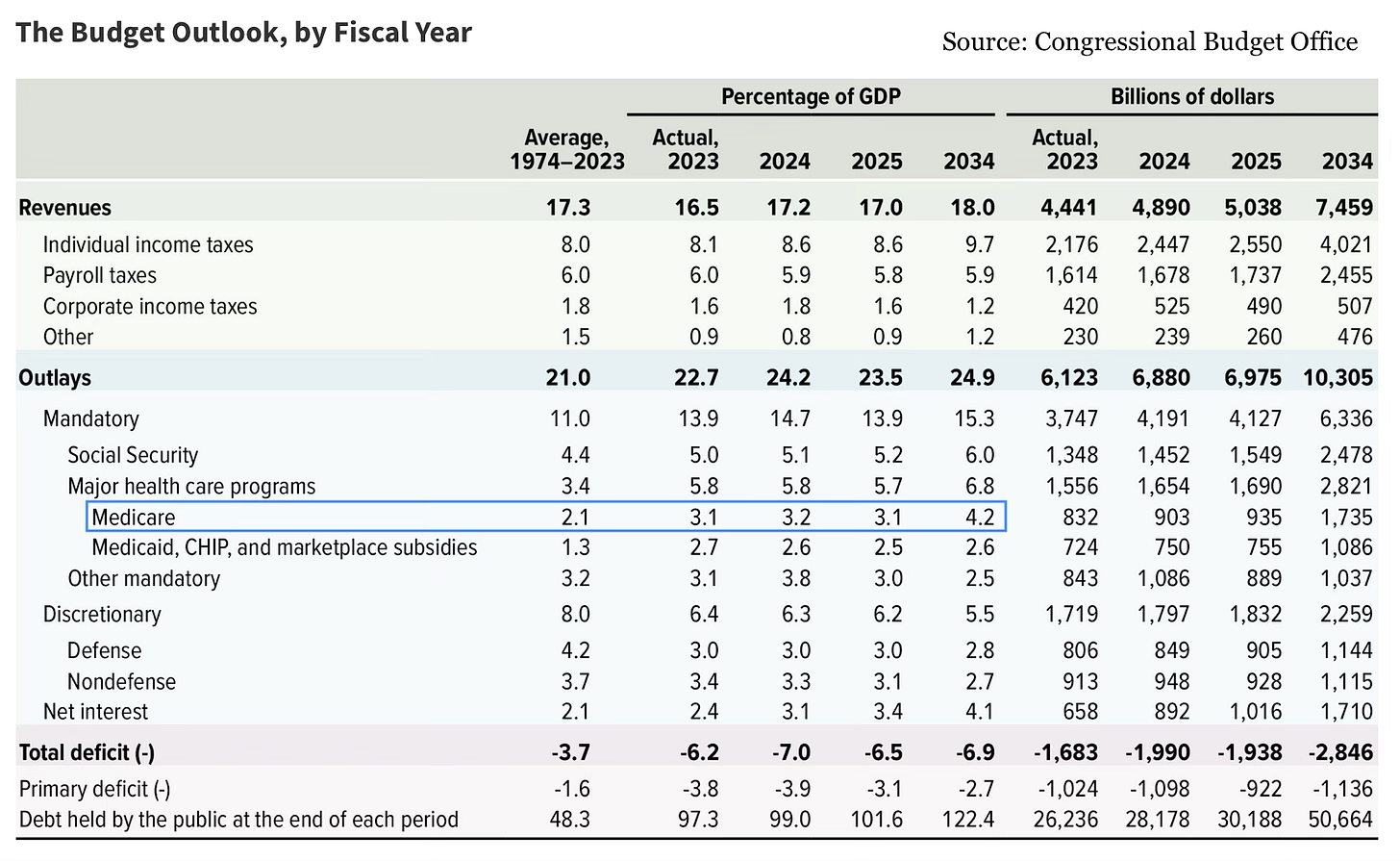

As a percentage of GDP, Medicare outlays are projected to increase more than any other major expenditure category.

With the federal budget top of mind for many following election season, efforts to curb wasteful spending are gaining steam. Overdiagnosis and the cascade of expensive procedures that follow are prime targets for cuts.

The questions I’m exploring today are:

How does overdiagnosis occur and in what areas?

What implications does overdiagnosis have for companies innovating in diagnostics?

How medical screening goes awry: a case study

Here is an example of how good intentions and apparently sound logic can have a negative impact on health economics and patient outcomes.

Imagine you're a healthcare expert focusing on Disease X, a rare condition affecting about 1 in every 100,000 people. In a population of 100 million, we would expect approximately 1,000 individuals to have the disease. Because of its low prevalence, screening for Disease X isn’t standard. Disease X is curable if caught early, costing only $5,000 per patient. If left undiagnosed, however, the disease becomes incurable, leading to long-term care costs of $1 million per patient.

Under the current standard, only 20 people undergo confirmatory testing annually, and around 10 cases are caught and cured at $5,000 each, while the remaining 990 require costly long-term care. The total cost breakdown looks like this:

Confirmatory Testing: 20 tests × $2,000 = $40,000

Cure Costs: 10 cases × $5,000 = $50,000

Long-Term Care: 990 cases × $1 million = $990 million

Total Cost: ~$990 million

This huge expense mostly arises from long-term care due to missed diagnoses. In fact, the testing costs are negligible.

As a solution, you propose more widespread screening, thinking it will save money by catching Disease X early. You develop a new, highly accurate test with 99% specificity and 100% sensitivity, costing just $1 per test. The math seems promising:

Screening: 100 million tests × $1 = $100 million

Confirmatory Testing: 1,000 × $2,000 = $2 million

Cure Costs: 1,000 × $5,000 = $5 million

Long-Term Care: $0 (!)

Total Cost: $107 million

This strategy would cut costs by roughly 9x, nearly a full order of magnitude, despite rolling out testing to the entire population!

But this is where the math of rare disease turns against you.

A 99% specificity implies a 1% false positive rate. Therefore roughly 1% of the healthy individuals taking the test will still show a positive. In a population of 100 million, this results in about 1 million positives, almost all of them false, each requiring a $2,000 confirmatory test. The actual costs are:

Screening: 100 million tests × $1 = $100 million

Confirmatory Testing: 1 million × $2,000 = $2 billion

Cure Costs: 1,000 × $5,000 = $5 million

Long-Term Care: $0

Total Cost: ~$2.1 billion

Oops.

While beneficial for patients with Disease X, this approach doubles the overall cost due to the massive number of false positives requiring confirmatory testing, despite having a test boasting 99% specificity. In a healthcare system with limited resources, allocating an extra $1 billion for a disease affecting only a small fraction of the population will be challenging to justify.
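The three scenarios above can be reproduced with a few lines of Python. This is only a sketch of the thought experiment: the function and constant names are my own, and the inputs are the illustrative Disease X figures, not real-world data. Note that it counts the 1,000 true positives alongside the false positives for confirmatory testing, so the last total lands slightly above the rounded figures in the text.

```python
# Illustrative figures from the Disease X thought experiment (not real data).
POPULATION = 100_000_000
CASES = POPULATION // 100_000      # prevalence of 1 in 100,000 -> 1,000 cases
SCREEN_COST = 1                    # dollars per screening test
CONFIRM_COST = 2_000               # dollars per confirmatory test
CURE_COST = 5_000                  # dollars per case caught early
LONG_TERM_COST = 1_000_000         # dollars per missed case


def status_quo_cost(confirmed=20, cured=10):
    """Total cost when only a handful of people are ever tested."""
    missed = CASES - cured
    return confirmed * CONFIRM_COST + cured * CURE_COST + missed * LONG_TERM_COST


def screening_cost(specificity, sensitivity=1.0):
    """Total cost of screening everyone, then confirming every positive."""
    healthy = POPULATION - CASES
    true_pos = round(CASES * sensitivity)
    false_pos = round(healthy * (1 - specificity))
    missed = CASES - true_pos
    return (POPULATION * SCREEN_COST
            + (true_pos + false_pos) * CONFIRM_COST
            + true_pos * CURE_COST
            + missed * LONG_TERM_COST)


print(f"Status quo:       ${status_quo_cost():,}")
print(f"Naive projection: ${screening_cost(specificity=1.0):,}")   # ignores false positives
print(f"With 99% spec.:   ${screening_cost(specificity=0.99):,}")
```

Changing `specificity` in the last call is a quick way to see how sensitive the total is to the false positive rate.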

Consider further if 1% of patients receiving a confirmatory test develop an infection requiring hospitalization, say from biopsy complication. Now an additional 10,000 patients end up in the hospital as a result of your screening initiative.

The mortality or quality of life benefit won’t be much to write home about. The bottom line is that increased testing — which is intuitively always a good thing — both increased costs and yielded poorer patient outcomes.

Overdiagnosis in prevalent diseases

The case above has its shortcomings, of course, and really this kind of result is only possible numerically if you have a very rare disease. You could summarize it simply by stating that the positive predictive value (PPV) of the test was abysmal. One million patients tested positive, yet only 1,000 had the disease — a PPV of 0.1%.

In other words, a positive result generated by this test means that the patient in question only has a 0.1% chance of having the disease.
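For readers who want to check the PPV figure, here is a minimal Python sketch using the standard definition (true positives divided by all positives). The function name is mine, and the second call uses a made-up prevalence of 10% purely to show how the same test behaves when a disease is common.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Probability that a person with a positive result actually has the disease."""
    true_pos = prevalence * sensitivity
    false_pos = (1 - prevalence) * (1 - specificity)
    return true_pos / (true_pos + false_pos)


# Disease X: 1 in 100,000 prevalence, 100% sensitivity, 99% specificity.
rare = positive_predictive_value(1 / 100_000, 1.0, 0.99)

# Same test, hypothetical common disease affecting 1 in 10 people.
common = positive_predictive_value(0.10, 1.0, 0.99)

print(f"PPV for rare disease:   {rare:.2%}")
print(f"PPV for common disease: {common:.2%}")
```

The test itself is identical in both calls. Only the prevalence changes, which is why the abysmal result is specific to very rare diseases.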

The overdiagnosis movement is also gaining traction for prevalent diseases and ailments, including:

ADHD. Although it’s not clear to what degree, ADHD seems to be overdiagnosed, especially with milder forms of the disorder.

Many cancers. While diagnoses of skin, thyroid, kidney, and prostate cancers have skyrocketed on a per capita basis over the last 50 years, death rates have remained largely stable, perhaps indicating that the spike is more attributable to a change in the definition of the disease than to a rise in disease prevalence. A torso and neck scan can reveal “incidentalomas” in up to 40% of individuals: benign tumours that can nonetheless trigger an avalanche of anxiety and follow-on care.

Hypertension. The standard “120/80” criteria may be unnecessarily diagnosing about 100 million Americans with high blood pressure, despite no increased risk of heart disease.

In these cases, it’s the disease criteria themselves that may unnecessarily medicalize up to half the American population. Although well-intentioned, the criteria are cast more like an industrial fishing trawler’s net than a harpoon gun, so that even a remote chance of developing the disease is lumped in with the disease itself.

Ray Moynihan, a professor at Bond University, summarized this phenomenon: “increasingly we’ve come to regard simply being ‘at risk’ of future disease as being a disease in its own right.”

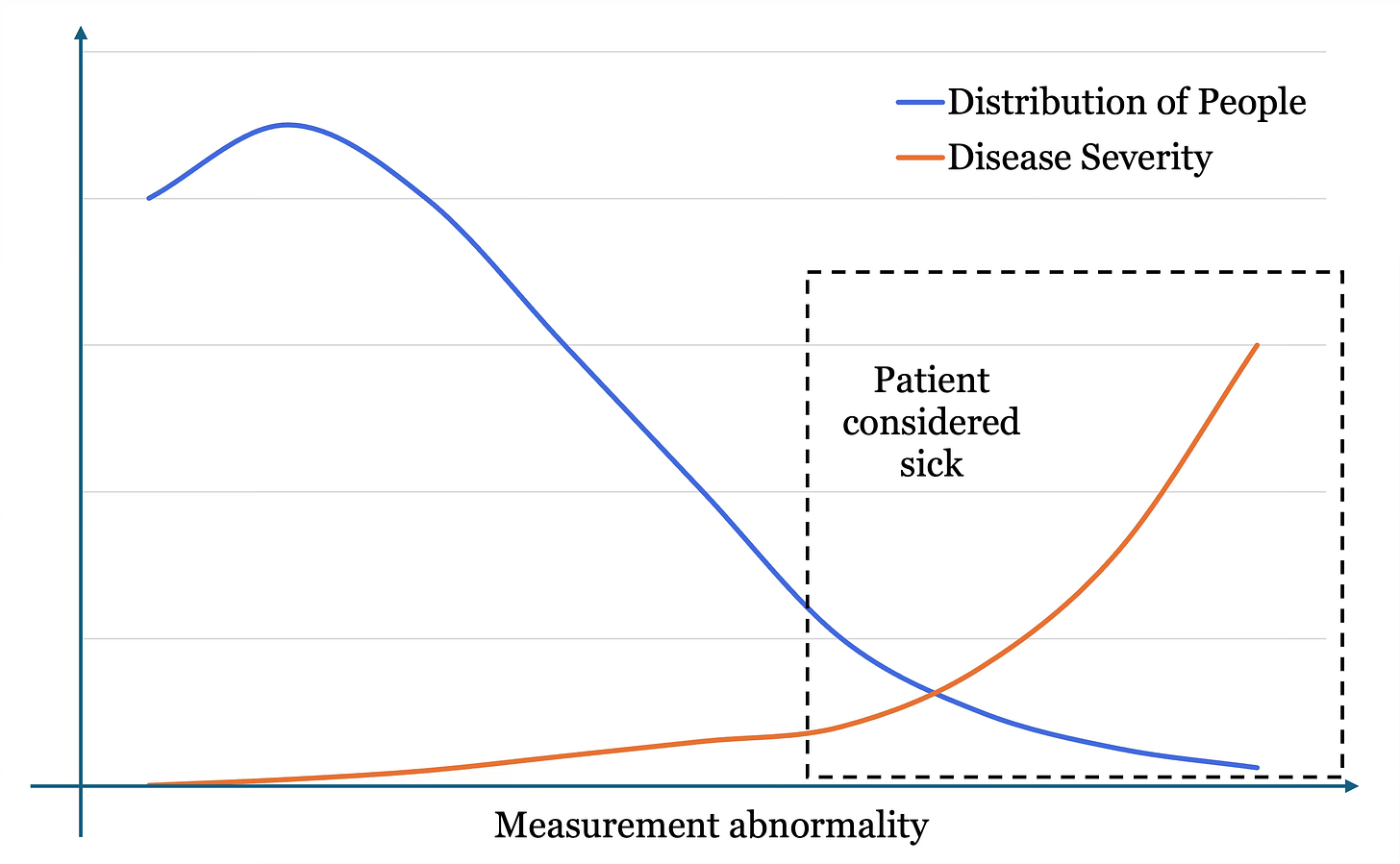

On the x-axis is measurement abnormality, or how far out of range the analyte is. In the context of blood pressure, the origin of the graph might read 120/80 and the far right as 180/120. The blue line represents the proportion of the population at each measurement level. Most people are slightly hypertensive, and then fewer and fewer people have abnormally high BP. The orange line represents the severity of the disease with increasing measurement. A BP of 120/80 is considered healthy. A BP of 180/120 has extremely high severity and likelihood of mortality and complications.

The issue with our disease categorization is that everyone in the dashed box is considered to have the disease. This includes individuals at the left of the box, which crucially is also where most people are: the area under the blue line is far larger there than at the right of the box. So this large number of individuals gets lumped in with the rest, despite measurements that are only slightly out of range and carry minimal severity. When many of these people are categorized as sick, though they would never develop symptoms, and undergo a cascade of unnecessary follow-up procedures, this is overdiagnosis.

What does this mean for diagnostic innovators?

Firstly, none of this means that medical diagnosis is any less of a viable space to innovate, displace old systems, and boost value.

As a percent of healthcare spend, diagnosis has an outsized impact. McKinsey notes:

“Diagnostic tests are estimated to influence 60 to 70 percent of all treatment decisions, for example, yet account for only 5 percent of hospital costs and 2 percent of Medicare expenditures.”

When a small upfront fee has the potential to eliminate much larger downstream expenses, this is cost-saving leverage. Companies need to understand if their solutions have cost-saving leverage, and of what magnitude. Spending a dollar now to save $100 later will always resonate, regardless of industry. Unfortunately, it’s also the intuitive understanding of cost-saving leverage that leads to overdiagnosis in the first place.
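One rough way to put a number on cost-saving leverage is the ratio of downstream cost avoided to upfront spend. That definition is my own simplification, not an industry standard, and the inputs below are the rounded Disease X figures from the case study. The sketch shows how the same screening program looks like a bargain on paper and a money-loser once false positives are counted.

```python
def cost_saving_leverage(upfront_cost, downstream_cost_avoided):
    """Dollars of downstream cost avoided per dollar spent upfront (assumed definition)."""
    if upfront_cost <= 0:
        raise ValueError("upfront_cost must be positive")
    return downstream_cost_avoided / upfront_cost


# "Spend a dollar now to save $100 later."
ideal = cost_saving_leverage(1, 100)

# Disease X: about $990M of long-term care avoided in both cases.
naive = cost_saving_leverage(107_000_000, 990_000_000)     # projection without false positives
actual = cost_saving_leverage(2_105_000_000, 990_000_000)  # with 1M confirmatory tests

print(f"Ideal:  {ideal:.0f}x")
print(f"Naive:  {naive:.1f}x")
print(f"Actual: {actual:.2f}x")
```

A ratio below 1 means the program spends more upfront than it saves downstream, which is exactly where the naive intuition breaks.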

Medical diagnostics is far from a “solved” field. There are many areas in which improved access to tests, discovery of novel biomarkers, or more sensitive instruments are desperately needed. Alzheimer’s is a notable case where irreversible damage may begin 20 years prior to symptom onset. Treatments are generally more effective the earlier they are administered, so a better method to detect risk for Alzheimer’s could be effective in wide-scale prevention. Tuberculosis testing remains far too expensive for developing nations, despite pressure from activists for manufacturers to sell tests at cost.

Here are several considerations for companies to assess whether they’re likely to face headwinds from overdiagnosis or gain momentum from addressing true clinical needs:

Evaluate your true clinical significance

If your diagnostics startup pitch sounds like a big data play, consider drilling down into what clinical impact your solution will have. If the information you generate has negligible follow-up value, you don’t have a long-term winner.

23andMe learned this the hard way. Direct-to-consumer genomic testing generates insights at the most personal level of what makes you, you. But ultimately, their tests were limited in clinical utility and often required follow-up consultations for interpretation. Over time, and possibly at the behest of their physicians, customers noticed and revenue declined. Despite procuring a vast, unique, proprietary trove of health data, 23andMe saw all of its independent directors resign when the CEO failed to put together a sufficient turn-around plan.

Binx Health is a counterexample. They manufacture a point-of-care platform for sexually transmitted infections (STIs) that operates within the doctor’s office, reducing time to results and directly addressing a public health need.

I’m a big fan of Binx’s easy-to-understand value proposition: When a patient gets an STI test, the samples typically need to be sent to a lab for analysis, with results returning several days or weeks later. Over 40% of the time following a positive test, the patient does not return to the clinic for treatment, potentially causing long-term reproductive issues in women and facilitating disease transmission. Binx has validated a key finding through extensive study: most people will wait in the doctor’s office for 30 minutes if they can get a result and prescription. At best, this could raise the treatment rate by 50%.

Double-check that the data you collect is actually needed in a medical context. Curiosity spending gets cut first. Medical spending gets cut last.

Anticipate the new doctor-consumer tension

The rise of accessible diagnostics has created a new dynamic between doctors and their patients.

Consumers demand convenient testing to feel empowered. They crave information about their physiology to take control of their health. On the other hand, doctors are increasingly mistrustful of third-party tests which may lack adequate validation or utility. Doctors may be dismissive of clinical evidence brought forth by a patient. Patients may feel like their doctor isn’t doing everything in their power to improve their health.

Diagnostic companies should anticipate this tension and consider whether they’re building primarily for the consumer or the physician. Ensuring clinical validity and gaining physician endorsement can strengthen credibility and acceptance.

Be mindful of de-implementation trends

Health systems are increasingly focused on de-implementing outdated or low-value procedures, and diagnostics innovators must be conscious of this shift. The Choosing Wisely campaign has pushed back against unnecessary testing, such as annual full-body MRIs or routine bloodwork in healthy individuals. Innovators need to be aware of these trends, especially if their tests target conditions that may soon see revised guidelines or criteria.

If your solution leverages a biomarker or procedure on the Choosing Wisely list, you’ll have an uphill battle persuading stakeholders of your odds of success.

Conclusions

People have a natural desire for more information, especially when it comes to their most valuable asset: health. The medtech industry responds by producing ever more diagnostic devices, initiatives, and services. Often this empowers the individual to take control of their health. But occasionally it cascades into needless anxiety and ballooning downstream costs.

Medical diagnostics ventures from start-ups to mega-cap corporations would do well to reevaluate the clinical relevancy of their solution, understand how this changes the physician-consumer dynamic, and deliver medical diagnostics solutions that continue to generate significant cost-savings leverage.